ON THE ROAD TO THE PUBLIC SEISMIC NETWORK

Edward Cranswick

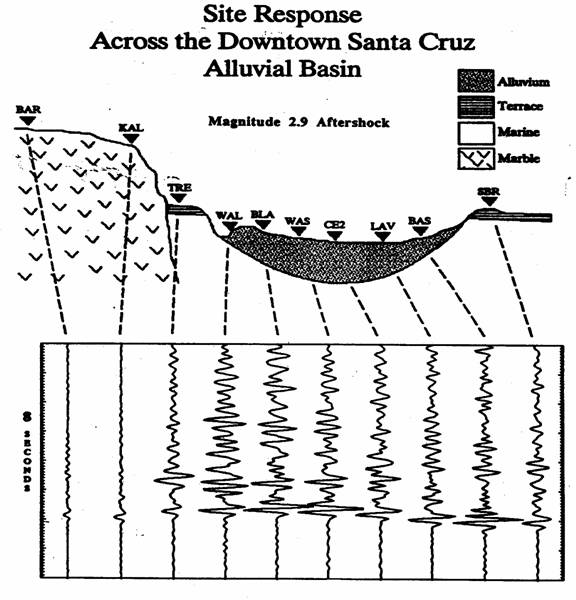

“I gotta get down to the earthquake office at the U.S. Geological Survey in Menlo Park and talk to those guys about making a cheap seismograph that we could sell to the yuppies,” she said as she snuffed out the joint she had been smoking and got into my car. “I live up here in the woods on a commune that is turning into an old age home for hippies; you can’t spend all your time relating to trees. We got to do something we can make some money from, and we could sell seismographs to all the people who keep feeling the earthquakes up here in Northern California.”
“Well, you’re in the right place, because I work for the USGS in Menlo Park, and I study earthquakes,” I responded.
It was a sunny Sunday in May 1985. I was driving south on Route 101, and I had stopped to pick up the hitchhiker just south of Eureka. She was barefoot and wearing a purple hippie dress with beads, et cetera. Her demeanor displayed an intimacy with the years since the sixties (she was perhaps in her middle fifties), but she was lean, fit and attractive, and her spirit was alive. I was returning to the Bay Area after spending a couple of days visiting an old friend who lived on a commune in the Klamath Mountains; we had gone to the 1969 Woodstock concert together. After a decade of studying earthquakes with portable seismographs, I had my first intimation that the general public might wish to participate in what I had regarded as an arcane activity that separated its practitioners from the masses. We all feel the Earth move, but you need a seismologist to explain it to you. Over the next five years, several technical developments and personal experiences would make me focus on the idea of a people’s seismograph and the related notion, “You don’t need a weatherman to know which way the wind blows” (Bob Dylan, 1965). But first, a little history.
I have since discovered that in every generation, there is at least one seismologist who believes that the technology of his time has developed sufficiently to allow the mass production and distribution of some form of seismograph. Such instruments, it is imagined, would provide the high density of observations needed to quantify hazardous ground motions for insurance purposes (Jaeger, 1929), to advance the science of strong ground motion (Richter, 1958), or to familiarize the public with seismology and involve them in the reduction of earthquake hazards (Lahr, 1978). I will summarize the factors in my background that led me to the same conclusion. As a seismology graduate student in the 1970s, I used “Big Drum” portable seismographs to record microearthquakes (smaller than magnitude 3) in New York State. “Big Drums” were analog electronic seismographs made portable by the transistor technology of the 1960s, and they recorded seismograms on smoked paper wrapped around “big” helicorder drums. By the early 1980s, as an employee of the USGS, I used portable autonomous digital seismographs (PADS) to record larger earthquakes in California. Digital seismographs require computers and software to analyze their records, and because little software for that purpose existed then, I spent a lot of time writing computer programs. In 1987, on a trip to Telluride, Colorado, to attend a Grateful Dead concert, I met Robert Banfill, who introduced me to the IBM-PC-compatible personal computer. I had been working with computers since 1967, when I was sixteen years old (the first was an IBM 360/30), and for me, the computer was an entity that resided in the temple of Science, protected from the analog masses by its digital High Priests. Robert, who had left school at the age of twelve and grown up in the desert of southern Utah, had built his first computer, a TRS-80, from a kit at the age of seventeen.
It was a revelation to me that Robert could do more on his 286 PC than I could do on the VAX minicomputer at my new USGS office in Golden, Colorado. A year later, I helped Robert obtain a USGS contract to begin porting my seismic processing software to PCs. In 1988, I was a member of the USGS team invited by the USSR to assist in the aftershock study of the devastating 7 December 1988 Spitak Earthquake, which killed more than 25,000 people in what was then the Soviet Republic of Armenia. For the first time, I truly experienced the horrific effects of strong ground motion on weak buildings, and I began to comprehend the impact of such an earthquake on the populace as a whole. We also had many difficulties trying to use 9-track tape to transfer the Armenian digital seismograms we had recorded to our Soviet counterparts. Instead, knowing that PCs were relatively common in the USSR, I used my own money to pay Robert to help me prepare a PC version of the seismic analysis software and the Spitak dataset, which was then distributed in the USSR. Based on the success of that project, Robert was awarded another USGS contract to make more of my software PC-compatible in preparation for additional work in Armenia. In September and October 1989, Robert and I were in Golden, Colorado, working frantically on the software conversion for a second Armenia field program that was planned for early November. We were scheduled to depart by car for the USGS in Menlo Park, California, on Wednesday morning, 18 October, to work with other USGS members of the Armenia project, but on Tuesday evening, 17 October, the Loma Prieta Earthquake struck the Santa Cruz Mountains, about 60 miles south of San Francisco. So instead, we left that night and drove to California over the next 24 hours.
Over the ensuing two weeks, using PCs rented from a local computer store, we set up and operated a PADS data-processing center in a Santa Cruz motel to process the aftershock data recorded by the PADS that the USGS team from Golden was operating in town. At the end of that period, based on that field processing, we faxed the USGS coordinator of the aftershock studies a figure that included the geological and seismological data needed to illustrate and explain the site response associated with the damaged sections of downtown Santa Cruz (see figure below).
In the course of three field trips to Armenia in 1990, I participated in the USGS project that installed and operated a small-aperture seismic array at the Garni Geophysical Observatory; the array was designed to monitor Soviet underground nuclear explosions. The Garni array project used PCs for both data acquisition and analysis. Near the end of the third field trip, in late November, I remember sitting in the cold, unheated kitchen of the Observatory guest house and lamenting to my Armenian colleague, Vigen, that although we had not finished the work on the array, I had to leave, because in two weeks Robert and I were scheduled to make a presentation at the annual meeting of the American Geophysical Union (AGU) in San Francisco. The presentation was entitled "Proposal for a High-density People's Seismograph Array Based on Home Computers in the San Francisco Bay Area." Having seen the devastation caused by the Spitak Earthquake and the large variation of damage over short distances in Santa Cruz, I realized that for site-response research and earthquake hazard assessment, we needed to deploy dense seismograph arrays within the densely inhabited metropolitan areas built on sedimentary basins.
Such arrays would be prohibitively expensive if implemented with traditional methods, but they would be economical with new approaches to the three requirements of data acquisition: 1) sites; 2) instrumentation; 3) data management. Most importantly, this meant that the PC could do for seismology what it had done for computing: make it a public activity. The public could use PCs to record ground motions right where they lived, exchange these records with one another, and thus compare the amount of shaking they had experienced. This could generate a community joined together by both the computer and the Earth (see appended abstract).
